Last updated at Wed, 07 Apr 2021 18:35:36 GMT

This post is the twelfth in a series, 12 Days of HaXmas, where we usually take a look at some of the more notable advancements and events in the Metasploit Framework over the course of 2014. As this is the last in the series, let's peek forward, to the unknowable future.

Happy new year! It's time to make some resolutions. There is nothing like a fresh new year to get one's optimism at its highest.

Meterpreter is a pretty nifty piece of engineering, and full of useful functionality. The various extensions and delivery mechanisms can do amazing things that I am still trying to wrap my head around. But, there are other, more fundamental, parts of Meterpreter's design that are showing their age. The primary problems I have run into are that it's not terribly efficient and still a little unpredictable when stressed, the POSIX meterpreter is pretty tricky to build and leads to a lot of frustration, and testing is a somewhat manual, tribal-knowledge sort of process. Due to the high degree of integration between Meterpreter, its wire protocol (TLV) and Metasploit Framework, it can be difficult to make sure that all of the bells and whistles keep working, either in isolation or together, and difficult to track down the cause of a problem.

Luckily, my job is to make all of these things better this coming year! To this end, I have been spending my first month at Rapid7 experiencing all of the pain and joy of Meterpreter development. At the same time, I have been hatching plans to make Meterpreter an even more amazing tool. As part of this effort, I started working on an experimental tool that I'm calling 'mettle'. Mettle is the ability to deal with difficulties in a spirited and resilient way. There also happens to be a cool-looking Marvel comic book character by that name. It is currently just something I am working on to scratch my second-system itch, but it tries to solve a few real problems with Meterpreter's design today.

If they can build it, they will come

First up, the POSIX build system for Meterpreter has not seen as much love as the Windows version. It's understandable: working on Makefiles is not very sexy work, and there is nothing like a lot of technical debt in a build system to sap one's spirits. But, they are important! So, the first leg of my project is to put together a build system that can work with multiple architectures, with cross compilation and readability in mind. Rule number one is to require nothing fancy, just GNU make. Everything else can be built or bootstrapped. This is my top-level makefile so far:

    PROJECT_NAME:=mettle
    ARCH=i386
    TARGET:=i386-linux-eng

    include scripts/make/Makefile.common
    include scripts/make/Makefile.libev
    include scripts/make/Makefile.libpcap
    include scripts/make/Makefile.libressl
    include scripts/make/Makefile.libtlv
    include scripts/make/Makefile.kernel-headers
    include scripts/make/Makefile.tools

There is not a lot to see here - almost everything is in the include files. The point of this is to make the build system easier to understand by not including the kitchen sink in a single big file. This also makes merging changes from pull requests easier, since unrelated changes don't bleed into each other. Want to add a new library? It's one line here, and all of the goo goes somewhere else. When I experimented with several of the older PRs for Meterpreter, all of them conflicted heavily with the big main Makefile, leading to a merge nightmare. Separated-out code is easier to merge and test, and helps prevent bit-rot.

The other thing to note is that there is an addition of a 'tools' and a 'kernel-headers' makefile. I'm working on building everything with a cross-compiler, notably the ELLCC compiler with the musl C library. ELLCC is an interesting project because it provides a single toolchain that not only targets many different platforms, but itself runs on those same platforms and more. Musl is a C library focused on security and simplicity. It also happens to still support Linux 2.4 kernels, which makes it interesting for 'run everywhere' software like Meterpreter. By using a cross compiler, builds on my Ubuntu 14.04 system can generate the same binaries as your Fedora 20 system. But, you could also build them on OS X or Windows and get the same results. It also makes it possible to target other architectures and platforms later. I have an outer driver script that looks like this, just to keep things honest:

    #!/bin/sh
    for i in armeb-linux-engeabi armeb-linux-engeabihf \
             arm-linux-engeabi arm-linux-engeabihf \
             mipsel-linux-eng mipsel-linux-engsf \
             mips-linux-eng mips-linux-engsf \
             ppc-linux-eng \
             i386-linux-eng x86_64-linux-eng; do
        echo Building target $i
        make TARGET=$i;
    done

Each of the builds is isolated into its own build tree, so you can keep them around and switch between them without having to 'make clean' first:

    ~/projects/mettle$ ls builds/
    armeb-linux-engeabi  armeb-linux-engeabihf  i386-linux-eng  kernel-headers-3.12.6
    ~/projects/mettle$ ls builds/i386-linux-eng/
    include  lib  libev  libpcap  libressl  share

I'm also keeping the source tarballs for the build in a separate repository to provide both a fast and reliable mirror for upstream sources. This removes dependency on upstream sources that may move, become unavailable, or might even be replaced.

    ~/projects/mettle/deps$ ls
    ellcc-Mac_OS_X_10.9.5-0.1.6.tar.gz  kernel-headers-3.12.6.tar.xz  libpcap-1.6.2.tar.gz
    ellcc-x86_64-linux-eng-0.1.6.tgz    libev-4.19.tar.gz             libressl-2.1.3.tar.gz

Separating out the pieces

I'm a big fan of separation of concerns and event-oriented programming. Modular code is simpler and easier to test because you can test each part in isolation. It also makes it easier to change one piece without affecting everything, because the interfaces are isolated and mockable. On that note, the core Meterpreter command dispatcher looks something like this (simplified, but not a lot):

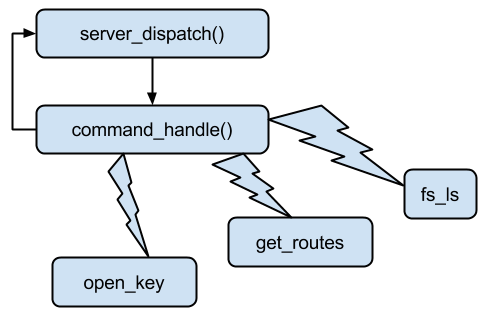

The server_dispatch function runs in a loop, receiving packets from the control socket. A 'packet' in this case is really just a header followed by a command. Each packet is passed to command_handle, which then scans a command table of strings to find a pointer to a function that knows how to parse and operate on this kind of packet. In most cases, a new thread is created by command_handle, which is passed the function pointer and the packet. The packets are then passed to the handler function, which may be built into Meterpreter or dynamically loaded as part of an extension (usually the latter). While this design keeps the listening socket from blocking while a command is dispatched, it means that a post module like windows/gather/enum_services translates into hundreds of threads spawned within Meterpreter. The lack of synchronization between these threads can also translate into stability problems, as a command sequence might be sent as 'open handle, close handle', but actually get executed in reverse.
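
As a rough illustration of that dispatch pattern (this is a Python sketch, not Meterpreter's actual C code), the loop below looks up a handler by name and starts one thread per packet. The handler names, the packet fields, and the `results` list are all hypothetical and exist only for the example.

```python
import threading

# Hypothetical handlers; real handlers would parse a TLV packet.
def handle_open(packet, results):
    results.append(("open", packet["handle"]))

def handle_close(packet, results):
    results.append(("close", packet["handle"]))

# Command table: method-name strings mapped to handler functions.
COMMAND_TABLE = {
    "handle_open": handle_open,
    "handle_close": handle_close,
}

def command_handle(packet, results):
    """Find the handler for this packet and run it on a brand new thread."""
    handler = COMMAND_TABLE.get(packet["method"])
    if handler is None:
        return None  # unknown command, nothing is started
    thread = threading.Thread(target=handler, args=(packet, results))
    thread.start()
    return thread

def server_dispatch(packets):
    """Dispatch every packet, then wait for all handler threads to finish."""
    results = []
    threads = [command_handle(p, results) for p in packets]
    started = [t for t in threads if t is not None]
    for t in started:
        t.join()
    return results, len(started)
```

Nothing in this sketch orders the handler threads relative to each other, so the sequence of entries in `results` is not guaranteed to match the order in which the packets arrived. The thread count also grows with the number of packets.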

This pass-through design also means that every layer of the stack has to understand the concept of a 'packet', how to parse it and how to generate responses directly back to the command socket. This makes it difficult to re-purpose the command handlers for a different API, like being hooked to a scripting language, or using a different type of wire format to communicate from the Metasploit framework.

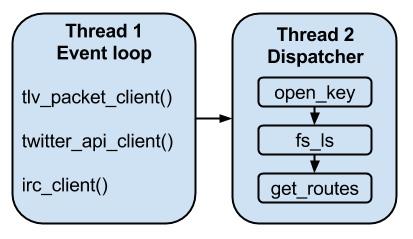

To solve these problems, I am integrating an event loop into mettle's design that will separate the command packet parsing from the callbacks, and will move the execution of the command handler itself into a separate thread. This design has several advantages.

First, there need to be only two threads at minimum - one to handle command ingress and egress, and another to actually execute the commands. More command dispatchers could be added for multiple channels into mettle.

Second, the packet parsing and generation will be separated out from the actual API calls. This will allow using the APIs from other sources, such as a built-in scripting language perhaps, or a different command format than TLV if desired.

Third, it allows commands to be run sequentially and synchronously without blocking the communications channels. Commands could even continue executing in the event of a disconnect from the command channel back to the Metasploit framework, and this design would be able to continue without any issues. It would behave similarly to how command dispatch works within the framework itself, supporting the concepts of queued commands and retries as well.
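
A minimal sketch of the queued, sequential execution described above, again in Python rather than mettle's own code. It assumes a single worker thread draining a queue, with the submitting thread standing in for the command ingress side. The `CommandQueue` class and its method names are invented for this illustration.

```python
import queue
import threading

class CommandQueue:
    """Runs queued commands one at a time, in order, on one worker thread."""

    _STOP = object()  # sentinel that tells the worker to exit

    def __init__(self):
        self._pending = queue.Queue()
        self.completed = []  # (name, result) pairs in execution order
        self._worker = threading.Thread(target=self._run, daemon=True)
        self._worker.start()

    def submit(self, name, func, *args):
        """Called from the I/O side; returns immediately without blocking."""
        self._pending.put((name, func, args))

    def _run(self):
        # The worker blocks on the queue, so it only runs when there is work.
        while True:
            item = self._pending.get()
            if item is self._STOP:
                break
            name, func, args = item
            self.completed.append((name, func(*args)))

    def shutdown(self):
        """Finish everything already queued, then stop the worker."""
        self._pending.put(self._STOP)
        self._worker.join()
```

Because a single worker pulls commands off a FIFO queue, an 'open handle, close handle' sequence runs in the order it was submitted. Commands that are already queued also keep running if the submitting side goes away for a while.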

Designing for testability

I'm tackling this on two fronts. On one hand, Meterpreter has some lovely test cases already in the form of the post/test/ modules. Unfortunately, these probably are not run as frequently as they should be, and are not part of our continuous integration tests. As such, they have suffered a little bit rot. But, I have been working on cleaning these tests up and fixing issues in Meterpreter that prevent these tests from passing today. I have also been working with Jenkins and Vagrant to provision fresh victim VMs on the fly and run rc scripts with Metasploit. Tests are nice, automated tests are awesome!

Hopefully, it will not take too long to get all of these tests passing reliably with Meterpreter, and these tests have already found some interesting bugs, such as this.

Because Meterpreter is already largely built as shared library objects, this is actually an ideal setup for unit testing. One 'simply' links a test harness executable to the libraries and away you go. However, there is a problem as hinted at above: the actual API calls parse and return packets directly, rather than implementing a C calling convention. The more modular design of mettle should help with this by giving the standard API calls a real function signature that can be tested directly, so the transport parsing, dispatch and APIs themselves can be tested separately.
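
To make the idea of a 'real function signature' concrete, here is a small Python sketch under stated assumptions. `fs_file_size`, `packet_adapter`, and the dictionary-shaped packet are hypothetical names chosen for the example, not part of Meterpreter or mettle.

```python
import os

def fs_file_size(path):
    """Plain API call: ordinary arguments in, ordinary value out."""
    return os.stat(path).st_size

def packet_adapter(api_call, arg_names):
    """Wrap a plain API call so it can be driven by a decoded packet.

    The packet here is just a dict; a real transport would decode TLV first.
    """
    def handler(packet):
        try:
            value = api_call(*(packet[name] for name in arg_names))
            return {"result": 0, "value": value}
        except OSError as exc:
            # Report the OS error code instead of raising into the transport.
            return {"result": exc.errno, "value": None}
    return handler

# The only place where the packet format and the API meet.
handle_file_size = packet_adapter(fs_file_size, ["path"])
```

With this split, a unit test can call `fs_file_size` directly with a path, and a separate test can exercise `packet_adapter` with any stand-in function. Neither test needs a socket or a live session.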

This will also allow mocking up the API backend itself, making concepts like recording and playing back Meterpreter sessions possible for testing Metasploit post modules without a backing VM. If nothing else, 2015 should be the year of tests!
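
One way record and playback could look, sketched in Python with invented names throughout (`RecordingBackend`, `PlaybackBackend`, `FakeTarget`, and `describe_session` are illustrations, not existing Metasploit classes). It assumes the code under test reaches the session only through a single `call()` method.

```python
class RecordingBackend:
    """Forwards calls to a real backend and records each call and result."""

    def __init__(self, backend):
        self._backend = backend
        self.transcript = []  # list of (name, args, result)

    def call(self, name, *args):
        result = getattr(self._backend, name)(*args)
        self.transcript.append((name, args, result))
        return result


class PlaybackBackend:
    """Replays a recorded transcript in order; no live target is needed."""

    def __init__(self, transcript):
        self._remaining = list(transcript)

    def call(self, name, *args):
        if not self._remaining:
            raise AssertionError(f"unexpected call: {name}{args}")
        expected_name, expected_args, result = self._remaining.pop(0)
        if (name, args) != (expected_name, expected_args):
            raise AssertionError(f"expected {expected_name}, got {name}")
        return result


class FakeTarget:
    """Hypothetical stand-in for the API of a live session."""

    def getuid(self):
        return "root"

    def hostname(self):
        return "victim-vm"


def describe_session(backend):
    """Example code under test: it only talks to backend.call()."""
    return f"{backend.call('getuid')}@{backend.call('hostname')}"
```

A test run would wrap a live session in `RecordingBackend` once, save the transcript, and later hand that transcript to `PlaybackBackend` so the same module logic can be checked without provisioning a VM.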

Onward, onward

There is a lot more work to do on mettle, so things may change over time. I envision it possibly replacing or living alongside the POSIX Meterpreter first, since the API breadth is lower there compared to the Windows version. It might simply live alongside Meterpreter in general, since it would be nice to have something that could be used for research and development without as much worry about breaking things in the short term. I hope your mind is also racing like mine, thinking of other uses for an event loop and disconnected command queue, like 'sleep' modes, scriptability, covert channels and similar. But for now, I am continuing with the basics of getting it as testable, buildable, efficient and reliable as possible.